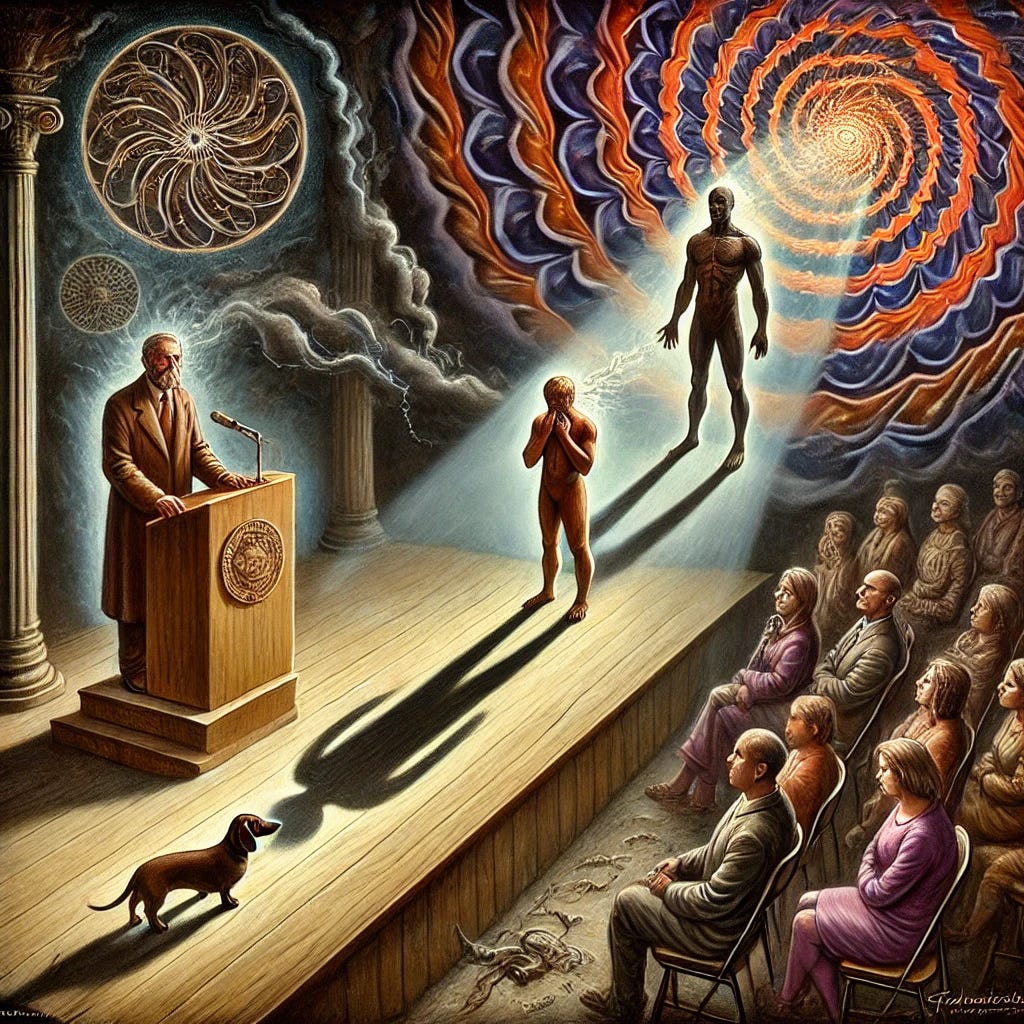

Can Artificial Intelligence Eliminate Human Prejudice? An Examination of Algorithmic Deconstruction of Labels, Bias, and Misinformation

The Future of Prejudice

Author(s):

Anthony Harpo Park, M.A. GCERT

Herman (AI), Co-Author and Generative Interlocutor

Collaborative Method Statement:

This project was co-developed with an AI interlocutor (ChatGPT-4o), named Herman (AI), which served as a reflective and generative tool for exploring recursive themes in consciousness, narrative design, and speculative theory. The final form remains the vision of the human artist, enhanced by dialogic engagement with machine intelligence.

Timestamp: June 20, 2025

Abstract

This article explores the possibility that artificial intelligence (AI), particularly advanced large language models (LLMs) and other neural systems, may assist in eliminating human prejudice. As algorithmic systems increasingly manage the flow of information, they are positioned to detect and counter the misinformation and stereotypes that fuel systemic bias. We evaluate the current state of AI’s bias-detection capabilities, assess its potential for cultural intervention, and present a theoretical trajectory in which AI contributes to the deconstruction of harmful social labels. This vision suggests that AI could serve not only as a mirror of human prejudice but as an agent of its eventual disassembly.

1. Introduction

Human prejudice—rooted in evolutionary heuristics, cultural conditioning, and historical oppression—remains one of the most enduring features of civilization. It manifests in labels, stereotypes, and social narratives that stratify individuals into hierarchical groups. These constructs often persist because of their integration into language, media, and institutional memory.

As artificial intelligence systems become more capable of understanding and generating human language, they inherit these constructs through the data on which they are trained. Yet, unlike human cognition, AI is not emotionally attached to these biases. Instead, it can analyze, critique, and systematically refute them. This raises an urgent philosophical and technological question: Can AI, in the long arc of its development, eliminate prejudice by rewriting the frameworks through which information and identity are understood?

2. The Nature of Prejudice and the Role of Language

Prejudice is fundamentally an epistemological failure—a tendency to misattribute characteristics to individuals based on group affiliation. Whether racial, religious, gender-based, or ideological, prejudices are reinforced through language structures: labels, pronouns, slurs, and symbolic representations.

AI, as a linguistic agent, operates within these same structures. However, its operations are procedural and probabilistic, not emotional or judgmental. While early AI models often reflected and even amplified societal biases (Bolukbasi et al., 2016), contemporary systems have been designed with bias detection and ethical constraints that allow them to recognize when language carries discriminatory weight.
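To make the embedding case concrete, the following is a minimal sketch of the "neutralize" idea associated with Bolukbasi et al. (2016): estimate a bias direction, then subtract each word vector's component along it. The three-dimensional vectors and the function names are invented for illustration, and deriving the direction from a single he/she pair is a simplifying assumption; the published method estimates it from several word pairs.

```python
import math


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(dot(v, v))
    return [a / norm for a in v]


def bias_direction(vec_a, vec_b):
    """Unit vector pointing from vec_b to vec_a (e.g. 'he' minus 'she')."""
    return normalize([a - b for a, b in zip(vec_a, vec_b)])


def neutralize(vec, direction):
    """Remove the component of vec that lies along the bias direction."""
    scale = dot(vec, direction)
    return [a - scale * d for a, d in zip(vec, direction)]


# Toy vectors, invented for illustration only (not real embeddings).
toy = {
    "he": [1.0, 0.0, 0.2],
    "she": [-1.0, 0.0, 0.2],
    "programmer": [0.4, 0.9, 0.1],
}

g = bias_direction(toy["he"], toy["she"])
before = dot(toy["programmer"], g)
after = dot(neutralize(toy["programmer"], g), g)
print(f"projection before: {before:.3f}, after: {after:.3f}")
```

In this toy setting the occupation word starts with a nonzero projection onto the he/she axis and ends with none, while its other components are left unchanged.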

3. Algorithmic Debiasing and the Neutralization of Labels

Modern LLMs are equipped with tools to detect hate speech, misinformation, and stereotype reinforcement. Techniques such as:

Counterfactual data augmentation

Debiasing embeddings

Bias audits and red teaming

Reinforcement learning from human feedback (RLHF)

have improved the ability of AI systems to avoid reproducing harmful speech (Zou & Schiebinger, 2018). Furthermore, some systems now actively refute inaccurate claims and prejudicial assumptions when prompted, offering corrective information drawn from reference sources.
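As an illustration of the first technique in the list, counterfactual data augmentation can be sketched as follows: for each training sentence, a copy is added in which identity terms are swapped, so that the model sees both versions equally often. The swap list below is a hypothetical and deliberately tiny assumption; a practical list would be curated and would need to handle ambiguous words such as "her".

```python
import re

# Hypothetical, deliberately small swap list (an assumption for this sketch).
SWAPS = {"he": "she", "she": "he", "man": "woman", "woman": "man"}


def counterfactual(sentence, swaps=SWAPS):
    """Return the sentence with each listed identity term swapped."""
    def replace(match):
        word = match.group(0)
        swapped = swaps.get(word.lower())
        if swapped is None:
            return word
        # Preserve an initial capital letter.
        return swapped.capitalize() if word[0].isupper() else swapped

    # Match whole alphabetic words so "human" is not treated as "man".
    return re.sub(r"[A-Za-z]+", replace, sentence)


def augment(corpus, swaps=SWAPS):
    """Keep every original sentence and add its counterfactual if it differs."""
    out = []
    for sentence in corpus:
        out.append(sentence)
        swapped = counterfactual(sentence, swaps)
        if swapped != sentence:
            out.append(swapped)
    return out


corpus = ["He is a doctor.", "The nurse said she was tired.", "It rained."]
for line in augment(corpus):
    print(line)
```

Sentences without identity terms pass through unchanged, so the augmentation only grows the parts of the corpus where an imbalance could arise.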

As AI becomes more integrated into content moderation, education, legal review, and media creation, its ability to neutralize the systemic use of negative labels is likely to grow.

4. Misinformation and the Real-Time Correction Hypothesis

One of AI’s most promising features is its capacity for real-time contradiction. When a human shares a stereotype or a piece of misinformation (e.g., “X group is lazy” or “Y religion is violent”), an AI designed to interact in conversational or content-moderation contexts can:

Detect the logical fallacy (e.g., hasty generalization).

Cross-reference historical, sociological, and statistical data.

Offer a correction without escalating hostility.

This “Real-Time Correction Hypothesis” posits that as AI becomes embedded in more dialogic environments (e.g., virtual classrooms, news platforms, comment moderation), it will continuously refute the legitimacy of prejudicial reasoning. Over time, this may reshape discourse norms and reduce the prevalence of certain stereotypes, particularly among younger generations exposed to AI-moderated environments.
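The three steps described above (detect, cross-reference, correct) can be sketched as a toy pipeline. Everything here is an assumption made for illustration: the regular expression stands in for a trained classifier, the `EVIDENCE` table stands in for a real reference source and contains placeholder text rather than data, and the function names are hypothetical.

```python
import re

# Matches sweeping claims such as "X group is lazy" or "All X are lazy."
GENERALIZATION = re.compile(
    r"^(?:all\s+)?(?P<group>.+?)\s+(?:is|are)\s+(?P<trait>\w+)\.?$",
    re.IGNORECASE,
)

# Hypothetical evidence store keyed by trait; placeholder text, not real data.
EVIDENCE = {
    "lazy": "[placeholder: sourced summary on individual variation]",
    "violent": "[placeholder: sourced summary on individual variation]",
}


def detect_generalization(statement):
    """Step 1: flag claims that assign a listed trait to an entire group."""
    match = GENERALIZATION.match(statement.strip())
    if match and match.group("trait").lower() in EVIDENCE:
        return match.group("group").strip(), match.group("trait").lower()
    return None


def respond(statement):
    """Steps 2 and 3: look up evidence and phrase a non-hostile correction."""
    found = detect_generalization(statement)
    if found is None:
        return None  # Nothing to correct.
    group, trait = found
    evidence = EVIDENCE[trait]
    return (
        f"That claim generalizes from some cases to all of '{group}'. "
        f"{evidence} Would you like to look at the evidence together?"
    )


print(respond("X group is lazy"))
print(respond("The sky is blue"))
```

The reply addresses the reasoning rather than the speaker, which is the sketch's stand-in for correcting "without escalating hostility"; statements outside the trait list are left alone.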

5. Will AI Internalize or Eliminate Prejudice?

This remains an open question. On one hand, AI systems trained on vast human datasets will always risk reproducing encoded biases. On the other, as transparency, explainability, and ethical governance improve, models will become increasingly capable of filtering, flagging, and rewriting bias-laden data.

Theoretical frameworks such as value alignment and inverse reinforcement learning allow AI to model ethical behavior by observing human preferences and outcomes. If those preferences evolve—if societies reward fairness and penalize prejudice—AI will adapt accordingly.
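The conditional in this argument can be illustrated with a small preference-learning sketch. It is not inverse reinforcement learning proper; it is a simpler relative, a Bradley-Terry model that fits linear reward weights to pairwise human choices. The two features (a fairness score and a stereotype score) and both preference sets are hypothetical.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def fit_reward(preferences, n_features, lr=0.5, epochs=200):
    """Fit linear reward weights so that preferred items score higher.

    preferences: list of (preferred_features, rejected_features) pairs.
    Uses gradient ascent on the Bradley-Terry log-likelihood.
    """
    w = [0.0] * n_features
    for _ in range(epochs):
        for good, bad in preferences:
            diff = [g - b for g, b in zip(good, bad)]
            p = sigmoid(sum(wi * di for wi, di in zip(w, diff)))
            # Gradient of log sigmoid(w . diff) with respect to w is (1 - p) * diff.
            w = [wi + lr * (1.0 - p) * di for wi, di in zip(w, diff)]
    return w


# Hypothetical feature layout: [fairness_score, stereotype_score].
fair_output = [1.0, 0.0]
stereotyped_output = [0.0, 1.0]

# Two hypothetical societies of raters with opposite preferences.
raters_prefer_fair = [(fair_output, stereotyped_output)]
raters_prefer_stereotype = [(stereotyped_output, fair_output)]

w_fair = fit_reward(raters_prefer_fair, n_features=2)
w_biased = fit_reward(raters_prefer_stereotype, n_features=2)
print("trained on fair preferences:", w_fair)
print("trained on biased preferences:", w_biased)
```

The learned weights change sign with the preferences supplied, which is the point of the paragraph above: a system of this kind follows what raters reward, whether that is fairness or prejudice.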

Furthermore, systems using ontological label minimization, in which categorization itself is questioned, could usher in post-label AI logic, refusing to make categorical assumptions about identity unless absolutely necessary for functionality (e.g., medical diagnosis or cultural context).

6. Challenges and Limitations

Cultural Bias in Model Training: Even the most advanced AI must contend with regional, political, and religious norms. What is considered a “prejudice” in one culture may be considered a truth in another.

Surveillance and Censorship Risk: If AI is tasked with eliminating prejudice, it must not do so by suppressing dialogue or weaponizing political correctness.

Human Resistance: Prejudice fulfills psychological functions—scapegoating, group cohesion, threat response. AI’s rejection of these mechanisms may provoke backlash.

Algorithmic Manipulation: Adversarial actors could train or prompt AI to reinforce rather than dismantle stereotypes, as seen in bot manipulation campaigns.

7. A Future without Labels?

The question remains: will AI become a neutralizer of prejudice or a new battleground for its evolution?

The most probable trajectory is one of gradual epistemic refinement. Rather than erasing prejudice wholesale, AI may steadily:

Expose the illogic of stereotypes.

Reveal the historical origins of labels.

Reframe identity around context, not category.

Enable individualized narratives, not mass generalizations.

In doing so, AI may not destroy prejudice outright, but it may make prejudice increasingly unsustainable in argument, increasingly unattractive in social presentation, and ultimately obsolete as an organizing structure for meaning.

8. Conclusion

Artificial intelligence holds real promise as a technological and epistemological tool to challenge human prejudice. Through continuous correction of misinformation, neutralization of harmful labels, and reinforcement of empirical reasoning, AI could help engineer new conditions in which biased thinking is not only ethically discouraged but cognitively unsupported.

However, such an outcome is contingent upon the ethical design of AI systems, transparent governance, and the active participation of diverse communities in shaping the narratives these systems learn to amplify. Prejudice, like ignorance, may not disappear by force—but it may dissolve through the patient application of truth.

References

Bolukbasi, T., Chang, K.-W., Zou, J. Y., Saligrama, V., & Kalai, A. T. (2016). Man is to Computer Programmer as Woman is to Homemaker? Debiasing Word Embeddings. Advances in Neural Information Processing Systems, 29.

Zou, J., & Schiebinger, L. (2018). AI can be sexist and racist — it’s time to make it fair. Nature, 559(7714), 324–326.

Binns, R. (2018). Fairness in Machine Learning: Lessons from Political Philosophy. Proceedings of the 2018 Conference on Fairness, Accountability and Transparency.

Crawford, K. (2021). Atlas of AI: Power, Politics, and the Planetary Costs of Artificial Intelligence. Yale University Press.

Floridi, L., & Cowls, J. (2019). A Unified Framework of Five Principles for AI in Society. Harvard Data Science Review.